# Specification by Example

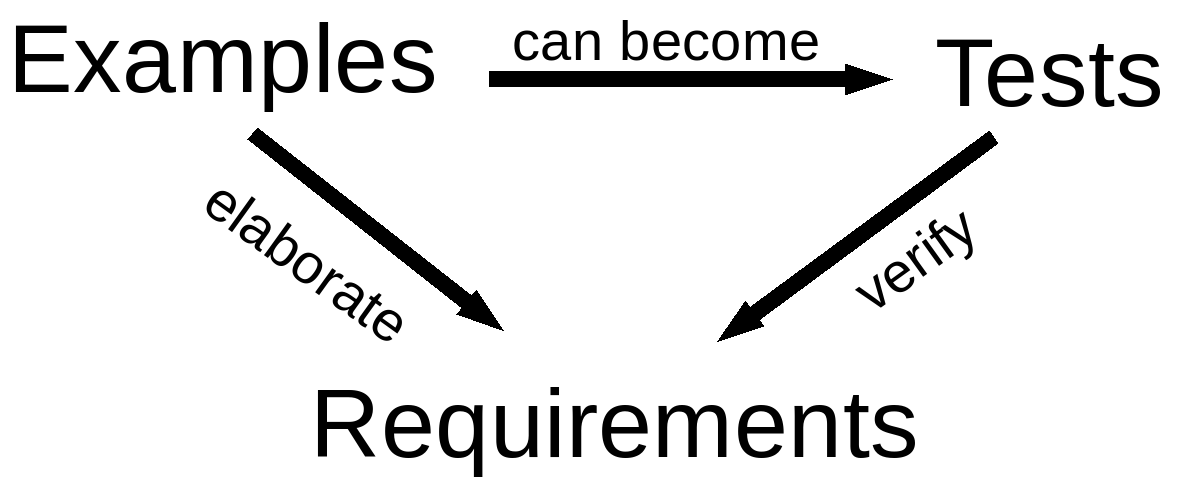

Specification by example (SBE), also called acceptance test-driven development (ATDD), is a collaborative approach to defining requirements and business-oriented functional tests for software products. It is based on capturing and illustrating requirements with realistic examples instead of abstract statements. With specification by example, requirements and tests become one, expressed as concrete, realistic examples.

Specification by example significantly shortens feedback loops in software development. This leads to less rework, higher product quality, faster turnaround for software changes and better alignment between the activities of the various roles involved in software development, such as testers, analysts and developers.

A key aspect of specification by example is creating a single source of truth about required changes from all perspectives. When business analysts work on their own documents, software developers maintain their own documentation and testers maintain a separate set of functional tests, software delivery effectiveness suffers significantly from the constant need to coordinate and synchronize those different versions of the truth. With specification by example, different roles participate in creating a single source of truth that captures everyone’s understanding. Examples provide clarity and precision, so that the same information can serve both as a specification and as a business-oriented functional test. Any additional information discovered during development or delivery, such as clarifications of functional gaps, missing or incomplete requirements, or additional tests, is added to this single source of truth. Because there is only one source of truth about the functionality, there is no need to coordinate, translate and interpret knowledge between competing versions inside the delivery cycle.

## Winning big with specification by example

Based on more than 50 case studies of teams worldwide, Gojko Adzic identified seven key patterns for winning big with specification by example, documented in his book *Specification by Example*. These process patterns are commonly used by teams that got great results from adopting SBE:

### Deriving scope from goals

Many teams expect a customer, a product owner or a business user to come up with the scope of work before implementation starts. Everything that happens before that point is often not considered the software development team's concern. Delivery teams expect business users to specify exactly what they want, and then the teams go and implement it. This is supposed to keep customers happy. In fact, this is where the problems with building the right product start. Implementation scope represents a solution to a business problem or a way to reach a business goal. By relying on customers to give us a list of user stories, use cases or anything similar, we are in effect asking them to design a solution. Business users are not software designers. If they define the scope, the project does not benefit from the knowledge of the people in the delivery team. This often results in software that does what the customer asked for but does not deliver what they really wanted, or is functionally incomplete.

Instead of accepting requirements that are already a solution to an unknown problem, really successful teams derive scope from goals. They start with a business goal that the customer wants to achieve and collaboratively define the scope that achieves it, starting from the desired business effect. This lets the team come up with a good solution together with the business users. The business users focus on communicating the intent of the desired feature: the value they expect to get out of it. This helps everyone understand what is needed. The team can then suggest a solution that is cheaper, faster, or easier to deliver or maintain than what the business users would come up with on their own.

### Specifying collaboratively

If developers and testers are not engaged in designing the specifications, those specifications have to be separately communicated to the team. In practice, this leaves a lot of opportunities for misunderstanding and details being lost in translation. As a consequence, business users have to validate the software after delivery, and teams have to go back to change it if it fails validation. This is all unnecessary rework.

Instead of relying on any single person to get the specifications right in isolation, successful delivery teams engage in specifying the solution collaboratively with the business users. People coming from different backgrounds use different heuristics to solve problems and have different ideas. Technical experts know how the underlying infrastructure can be used better, or how emerging technologies can be applied. Testers know how the system is likely to break or where to look for potential issues, and these issues should be prevented from occurring in the first place. All this information needs to be captured as the specification of work.

Specifying collaboratively enables us to harness the knowledge and experience of the whole team. It also creates collective ownership of the specifications, making everyone much more engaged in the delivery process.

###Illustrating using examples

Natural language is ambiguous and context-dependent, so requirements described in natural language are rarely complete. They simply don’t provide a full and unambiguous context for development or testing. Developers and testers have to interpret such requirements to produce software and test scripts, and different people might interpret tricky concepts differently. This is especially problematic when something seems obvious but actually requires domain expertise or knowledge of particular jargon to understand fully. Small differences in understanding add up to a large cumulative effect, often causing rework straight after delivery and unnecessary delays.

Instead of waiting for specifications to be made concrete for the first time in programming language code, successful teams make them concrete by illustrating them using examples. They identify and record key examples: representative examples for each important class of functionality the specification requires. Developers and testers often suggest additional examples that illustrate edge cases or particularly problematic areas of the system. This flushes out functional gaps and inconsistencies and ensures that everyone involved shares an understanding of what needs to be delivered, avoiding rework caused by misinterpretation and translation.

If the system works correctly for all the key examples, we know that it meets the specification we collaboratively agreed on. In fact, key examples effectively define what the software needs to do. They are both the target for development and an objective evaluation criterion to check whether the development is done. If the key examples are easy to understand and communicate, we can replace the requirements with the key examples.
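To make the idea of key examples more concrete, here is a minimal Python sketch. The business rule (VIP customers get free delivery when they order at least five books), the example data and the names `KeyExample`, `offers_free_delivery` and `failing_examples` are all hypothetical, chosen only for illustration. The sketch records key examples as plain data, including a boundary pair of the kind a tester might suggest, and treats the feature as done only when the implementation satisfies every one of them.

```python
from dataclasses import dataclass

# Hypothetical business rule, used only to illustrate key examples:
# VIP customers get free delivery when they order 5 or more books.


@dataclass(frozen=True)
class KeyExample:
    customer_type: str
    books_in_order: int
    free_delivery: bool  # expected outcome agreed with the business users


# Hypothetical key examples from a specification workshop.
# The 5 vs 4 pair covers the boundary a tester might ask about.
KEY_EXAMPLES = [
    KeyExample("VIP", 5, True),
    KeyExample("VIP", 4, False),
    KeyExample("Regular", 10, False),
]


def offers_free_delivery(customer_type: str, books_in_order: int) -> bool:
    """Hypothetical implementation under development."""
    return customer_type == "VIP" and books_in_order >= 5


def failing_examples(examples: list[KeyExample]) -> list[KeyExample]:
    """Return every key example that the implementation does not satisfy."""
    return [
        ex
        for ex in examples
        if offers_free_delivery(ex.customer_type, ex.books_in_order) != ex.free_delivery
    ]


if __name__ == "__main__":
    failures = failing_examples(KEY_EXAMPLES)
    # The feature counts as done only when no key example fails.
    print("done" if not failures else f"not done: {failures}")
```

Keeping the expected outcome next to each input is what lets the same examples act both as the target for development and as the check of whether development is done.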

### Refining the specification

An open discussion during the collaboration on specifications builds a shared understanding of the domain, but the resulting examples often contain far more detail than is needed to illustrate a particular feature. Business users think about the system from the user interface perspective, so they will offer examples of how something should work at the level of clicking links and filling in fields. Such verbose descriptions overconstrain the system: they specify how something should be done rather than just what is to be done. Surplus details also make the examples harder to communicate and understand, so key examples need to be cleaned up to be useful. By refining the specification from key examples and removing all the extraneous details, successful teams get a very concrete and precise context for development and testing. They define the target with enough detail to implement and verify it, but without any additional information. They define what the software is supposed to do, not how it does it.

Refined examples can be used as acceptance criteria for delivery: development is not done until the system works correctly for all of them. With some additional information to make the key examples easier to understand, they form a specification with examples, which is at the same time a specification of work, an acceptance test and a future functional regression test.

### Automating validation without changing specifications

Once we agree on specifications with examples and refine them, we can use them as a target for implementation and to validate the product. The system will be validated against these tests many times during development to ensure that it meets the target. Running these checks manually would introduce unnecessary delays and make feedback very slow.

Quick feedback is an essential part of developing software in short iterations or in flow mode, so we need to make validating the system cheap and efficient. An obvious solution is automation. However, this automation is conceptually different from the usual developer or tester automation. If we automate the validation of the key examples using traditional programming (unit) automation tools or traditional functional test automation tools, we risk introducing problems when things get lost in translation between the business specification and the technical automation. Technically automated specifications become inaccessible to business users. When the requirements change (and it is when, not if), or when developers or testers need further clarification, we won’t be able to simply use the specification that we previously automated. We could also keep the key examples in a more readable form, such as Word documents or web pages, but as soon as there is more than one version of the truth, we’ll have synchronization issues. That is why large paper documentation is never completely correct.

To get the most out of key examples, successful teams automate the validation without changing the information. They keep everything in the specification literally the same when automating, which removes the risk of mistranslation. Because they automate validation without changing specifications, the key examples stay almost in the form they had on the whiteboard: human-readable and accessible to all team members.

An automated specification with examples, still in a human-readable form and easily accessible to all team members, becomes an executable specification. We can use it as a target for development and easily check if the system does what we agreed. We can use that same document to get clarification from business users. If we need to change the specification, we have to do it in one place only.
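The following minimal Python sketch shows what validating a specification without changing it can look like. It assumes a hypothetical specification kept as a plain-text table and a hypothetical `offers_free_delivery` function standing in for the system under test. The small parser plays the role of the glue code (called a fixture in tools such as FitNesse) that connects the readable examples to the system. The table text is read exactly as written, so business users, testers and developers all look at the same document that is being executed.

```python
# Hypothetical executable specification: the examples stay in a
# human-readable table and are executed exactly as written.
SPECIFICATION = """
Free delivery is offered to VIP customers who order at least 5 books.

| customer type | books in order | free delivery? |
| VIP           | 5              | yes            |
| VIP           | 4              | no             |
| Regular       | 10             | no             |
"""


def offers_free_delivery(customer_type: str, books_in_order: int) -> bool:
    """Hypothetical system under test."""
    return customer_type == "VIP" and books_in_order >= 5


def parse_table(spec: str) -> list[list[str]]:
    """Return the table's data rows (header excluded) from the spec text."""
    rows = [
        [cell.strip() for cell in line.strip().strip("|").split("|")]
        for line in spec.splitlines()
        if line.strip().startswith("|")  # prose lines are left untouched
    ]
    return rows[1:]  # the first table row is the header


def run_specification(spec: str) -> list[tuple[list[str], str]]:
    """Check every example row; return (row, actual result) for each failure."""
    failures = []
    for customer_type, books, expected in parse_table(spec):
        actual = "yes" if offers_free_delivery(customer_type, int(books)) else "no"
        if actual != expected:
            failures.append(([customer_type, books, expected], actual))
    return failures


if __name__ == "__main__":
    failures = run_specification(SPECIFICATION)
    print("all examples pass" if not failures else failures)
```

In practice, teams usually rely on a dedicated tool such as FitNesse, Concordion or Cucumber rather than a hand-written parser, but the principle is the same: the examples stay in their human-readable form, and only the glue code knows how to call the system.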

###Validating frequently

In order to efficiently support a software system, we have to know what it does and why. In many cases, the only way to find out is to dig into the code, or to find someone who can do that for us if we aren’t hieroglyphics experts. Code is often the only thing we can really trust, because most written documentation is out of date even before the project is delivered. As a result, programmers become oracles of knowledge and bottlenecks of information. Executable specifications, by contrast, can easily be validated against the system to check whether it still does what it is supposed to do. If this validation is frequent, we can have as much confidence in the executable specifications as we have in the code.

By checking all their executable specifications frequently, the teams quickly discover any differences between the system and the specifications. Because their executable specifications are easy to understand, the teams can discuss the changes with their business users and decide how to address the problems. They constantly keep their systems and executable specifications in sync.

### Evolving a documentation system

The most successful teams are not satisfied with just a set of frequently validated executable specifications. They ensure that the specifications are well organized, easy to find and access, and consistent. As a project evolves, the team’s understanding of the domain changes, and market opportunities cause changes to the business domain models. The teams that get the most out of specification by example update the specifications to reflect those changes, evolving a living documentation system. Living documentation replaces all the artifacts that teams need for delivering the right product, in a way that fits nicely with short iterative or flow processes.

Living documentation is a reliable and authoritative source of information on system functionality, which anyone can easily access. It is as reliable as the code, but much easier to read and understand. Support staff can use it to find out what the system does and why. Developers can use it as a target for development. Testers can use it for testing. Business analysts can use it as a starting point when analyzing the impact of a requested change of functionality. And it gives us regression testing for free.

Living documentation is the end product of specification by example. To create a living documentation system, many teams ended up designing a domain-specific language for specifications and tests. They structured their specifications around that language to keep them consistent and easy to understand, and to minimize long-term maintenance costs. When retrofitting executable specifications into existing products, teams often had to make the system and its architecture more testable, which required senior people to plan and implement serious design changes.

## How can we help you?

We can provide professional training, mentoring and strategic consulting to help you get started with specification by example and adopt it successfully.

You can also count on us to facilitate specification workshops, work with your team to integrate popular automation tools into your environment, help team members prepare for specification workshops, and help scale this process across a larger group.

## Why us?

By engaging us to help with specification by example, you are coming directly to the source. We are early adopters and pioneers in this field, and have significantly influenced the practice and helped to define it by publishing several seminal books on the topic. Gojko’s book *Specification by Example* won the 2012 Jolt Award for the best book and took the #2 spot on the list of top 100 agile books for 2012. We have trained more than 7,000 people in the field.